In these divisive times, I’m noticing two loud camps around AI.

Some are convinced it will take over the world. Others are sprinting to harness it so they can rule the world.

Whichever camp you’re closer to, one thing is clear: our world is getting more AI‑dense. This piece is about what remains stubbornly, beautifully human in that world.

Meaning‑Making / Purpose → Goals

It starts with meaning‑making.

It will always take a human to connect the dots between a feature, a workflow, or a policy and someone’s lived experience. As a leader, you tell the story of why this matters now, for these people, in this context.

AI might help you with options and trade‑offs, with market opportunity and metrics, with historical patterns and success stories. But it’s a human who stands up and declares a meaning that resonates with other humans, whether customers, partners, employees, or investors.

When your meaning‑making lands, goals stop being distant targets and start becoming lived commitments addressing specific questions:

Who are we serving?

What changes for them if we succeed?

How will we know it’s actually better, not just busier?

AI can help you forecast, cluster, and optimize. It cannot decide what is worth optimizing for. That’s you.

Value‑Aligned Judgment → Purpose

It takes a human to practice value‑aligned judgment. And in your world, whatever your role, level, or status, that human is you.

Tools can surface patterns and options. They can rank, score, and recommend. But they can’t sit with the hard trade‑offs and still make a call you’re genuinely proud to own.

This is where purpose moves from a poster on the wall to something you practice in real time:

You say ‘no’ to the elegant solution that quietly erodes trust.

You slow down the rollout because the impact on one vulnerable group isn’t yet understood.

You own the decision, even when the model “suggested” a different path.

Purpose, in this sense, is your moral compass and the spine of the system. It’s the throughline that lets people trust that your “yes” and your “no” are anchored in more than convenience, pressure, or short‑term gain.

Bold Aspirations, Clear Pathways, Invited Engagement → Agency

With clear meaning and a values‑based purpose, you can focus on declaring bold aspirations.

Models are great at “more of the same.” They predict the next likely word, the next likely move, the next likely pattern. Humans are the ones who bring the razzle‑dazzle and invite the ‘what if.’ For example, a human might wonder, ‘What if we aimed for something 10x more inclusive, more sustainable, more connected?’

With bold aspirations, clear pathways, and invited engagement, you can build agency:

Bold aspirations: We name futures that are worth the stretch, beyond incremental improvement.

Clear pathways: We make the next steps visible and doable, so people know how to move toward that future.

Invited engagement: We ask, we listen, and we co‑create, so people feel like participants, not passengers.

Agency shows up when people can say, “I see where we’re going, I know how I can contribute, and my contribution matters.”

No AI system can give that to your team without you first choosing it and designing for it.

Relational Stewardship, Diverse Participation, Learning‑Forward Culture → Resilience

Relational stewardship lays the groundwork for a flywheel: a continuous loop for growth.

Relational stewardship can serve an organization from the outside in or from the inside out.

From the outside in, it’s about building an ecosystem of partners that helps the business grow, serve, and expand. These alliances and partnerships complement your offerings, extend impact, and build shared momentum that benefits all parties.

From the inside out, it’s about facilitating inclusion, advocating for diversity, and designing an equitable, proactive, and learning‑forward culture that becomes the heart of an organization’s success.

In short, relational stewardship can be designed to build and expand resilience:

Relational stewardship: You tend to the human fabric (trust, safety, belonging), especially during change.

Proactive expansion: You work together to complement, innovate, collaborate, and expand, with the needs of the customer in mind.

Diverse participation: You deliberately include voices with different backgrounds, roles, and perspectives, so the system doesn’t converge on one narrow view of “normal.”

Learning‑forward culture: You treat mistakes and surprises as raw material for growth, not reasons for shame or blame.

Resilience isn’t about never breaking. It’s about being able to bend, adapt, and come back wiser, together.

In an AI‑dense world, resilience is what lets you keep experimenting without burning your people out or leaving them behind.

Bringing It All Together

If we map it out, the human portfolio looks something like this:

Meaning‑making / Purpose → Goals

Value‑aligned judgment → Purpose (lived, not just stated)

Bold aspirations + clear pathways + invited engagement → Agency

Relational stewardship + diverse participation + learning‑forward culture → Resilience

That’s the work only humans can do.

Not to out‑compute the machines, but to keep us anchored in goals that matter, purpose we can stand behind, agency we can feel, and resilience we can share.

In the end, that’s what’s uniquely human in an AI‑dense world.

Will you choose to be more human, and more hopeful, while fully engaging with the opportunities and challenges of an AI‑dense world?

Shameless plug: Hope ⇔ Goals × Pathways × Agency × Resilience

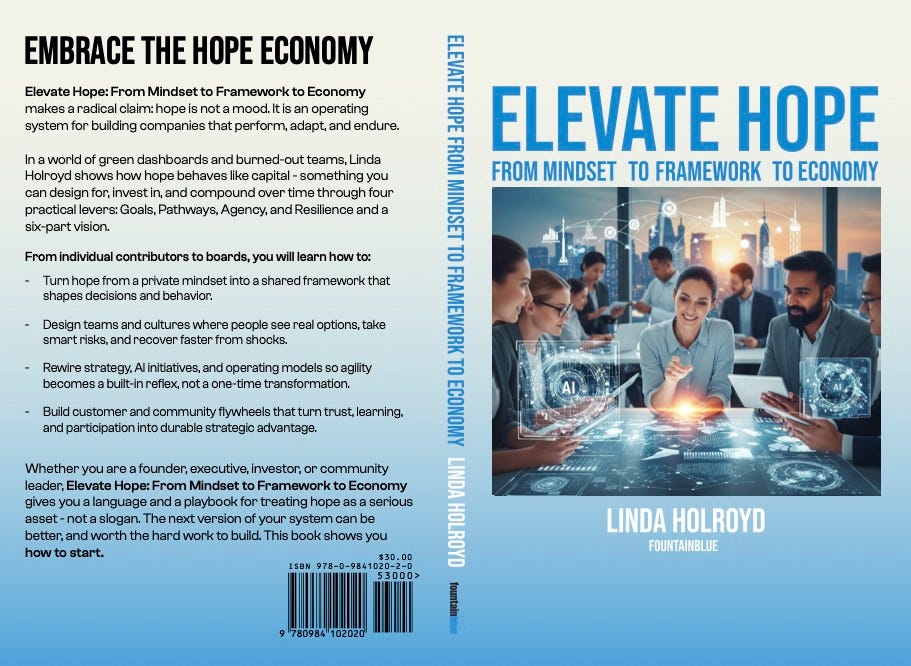

This is FountainBlue’s Hope Framework, which I unpack in Elevate Hope: From Mindset to Framework to Economy, scheduled for release on February 14, 2026.

Hope stops being a feeling and becomes a practical multiplier when clear goals, viable pathways, real agency, and shared resilience all reinforce each other.