January was a blur with its new‑year gatherings, reconnections with partners and clients after the winter break, and deep dives into my next book, Elevate Hope: From Mindset to Framework to Economy.

I have always had a complicated relationship with feedback. I welcome it, yet the perfectionist in me only wants to hear the ‘atta‑girls’. I embrace technology and its flexibility, the way it lets the right people collaborate, but still feel a twinge of frustration when a tool does exactly what I asked for instead of what I meant for it to do!

I am proud to know so many people with bigger brains and bigger hearts than my own, willing to step in enthusiastically with their input, wisdom, and ideas. And I can only blame myself when that generous – and sometimes conflicting – feedback turns into 20 percent more work.

All of this is to say thank you to the dozens of “editors” who have stepped in and stepped up to shape my upcoming book. You have made the book at least 40 percent better, and you have stretched me even more than you stretched the manuscript.

In this month’s newsletter, you will find:

an article on Humans Over the Loop: The Head, Heart, and Hands of Leadership in the Age of AI,

an article on Humans In the Loop: Management and Execution with AI at Your Side,

an excerpt from the Author’s Note of my upcoming Hope Economy book, due for release in mid‑February,

Chapter Three from Hope in an Age of Disillusionment, and

information about FountainBlue’s Hope Toolkit Companion web app, based on the 125 tools from the Hope in an Age of Disillusionment workbook.

The Head, Heart, and Hands of Leadership in the Age of AI

Artificial intelligence can now generate options, draft strategies, and optimize operations at a speed no leadership team can match, but it still cannot answer three non-delegable questions: What do we believe in? Where are we going? How will we behave while we get there?

Strategic leadership in the age of AI is about using head, heart, and hands to design the system in which humans and intelligent agents operate so those questions are answered on purpose, not by accident.

The ‘Heart’ is the guardrail for how people are treated. The ‘Head’ is the discipline that sets and aligns to a strategic purpose. The ‘Hands’ are the commitment to shape execution so it reflects those values and delivers on that strategy. When all three are aligned, AI amplifies performance instead of amplifying risk.

The ‘Heart’ is not sentiment; it is constraint. It defines what will not be traded away, even under pressure and even when automation makes it tempting. As AI moves into hiring, evaluation, scheduling, and customer interactions, the absence of clear guardrails around dignity, fairness, and psychological safety becomes a structural risk, not a soft issue.

Leaders responsible for both people and AI make those guardrails explicit. They state where human review is mandatory, where AI cannot be the sole decision maker, and how data about employees and customers will and will not be used. They tie these rules directly to values, not just compliance. Research on transformation and trust shows that organizations that anchor change in visible values sustain engagement and adapt faster than those that reduce it to tools and cost savings.

The ‘Heart’ also shows up in what happens when AI is wrong. Systems will misclassify, overfit, or surface biased patterns. Leaders who are serious about heart take accountability for those errors, make remediation visible, and ensure that people are not treated as collateral damage of experimentation. Without that, every new AI initiative quietly erodes confidence in leadership.

The ‘Head’ is where purpose, direction, and hard tradeoffs live. It is not enough to ask what AI can do; the strategic question is what you want it to do and what you refuse to let it do, given your purpose. As access to advanced tools becomes ubiquitous, advantage shifts from experimentation to disciplined alignment between AI portfolios and clear strategic objectives.

Leaders in the age of AI use the ‘Head’ to define what they will build and how they will build it. They decide which value pools matter, which risks are acceptable, and what success looks like beyond short-term efficiency. That may mean saying no to attractive use cases that conflict with the organization’s long-term positioning or with the guardrails set by the ‘Heart’.

It may also mean concentrating investment where AI augments human strengths in judgment, creativity, and complex problem solving rather than simply chasing automation for its own sake.

The ‘Head’ is also about portfolio discipline. Recent research on AI adoption points to a shift away from scattered pilots toward fewer, better-governed initiatives with clear ownership, metrics, and exit criteria. Leaders who oversee these transitions demand transparency into how models are trained, how they perform across segments, and how they will be monitored once embedded in operations. Without that discipline, AI becomes an uncontrolled layer that can influence critical decisions in intended and unintended ways, often without anyone noticing.

The ‘Hands’ are where people, process, and technology are designed so that daily execution reflects the decisions made in the ‘Head’ and the ‘Heart’. In most organizations, this is where the system either holds or breaks.

On the people side, the ‘Hands’ mean selecting and developing leaders who can operate in mixed human and AI teams and who have the resilience and learning agility to adapt as tools and roles change. Research on identifying high-potential candidates emphasizes intrinsic qualities such as judgment, collaboration, and eagerness to learn as better predictors of sustained performance than credentials alone. Leaders in the age of AI insist that these criteria shape promotions and key assignments, not just performance conversations.

On the process side, the ‘Hands’ mean redesigning workflows so human strengths are preserved and amplified. As a rule of thumb, AI might take on draft generation, pattern recognition, and triage, while humans handle exceptions, value conflicts, and nonstandard opportunities. Processes should include explicit points where humans can challenge or override AI outputs and where learning from those interventions feeds back into both the model and the operating playbook.

On the technology side, the ‘Hands’ are about implementation and discipline. Systems are chosen and configured to respect the values and boundaries already defined, not the other way around. Cross-functional teams test AI in realistic scenarios before scaling up, with legal, risk, HR, and frontline perspectives represented in the room. Leaders in the age of AI treat this as core infrastructure, not an optional extra.

When the ‘Head’, the ‘Heart’, and the ‘Hands’ are treated as separate conversations, the seams are exposed. A sharp strategy without values produces speed, but not trust. Lived values without strategic clarity produce intent without impact. Relentless execution without values or strategy just spins in circles.

In the age of AI, strategic leadership means standing over the loop and integrating all three. The ‘Heart’ sets non-negotiable guardrails for how people are treated, grounded in core values. The ‘Head’ defines the purpose and boundaries that give AI a framework and direction. The ‘Hands’ ensure that the envisioned structures, roles, people, and systems on the ground actually act, respond, and behave as intended.

AI will continue to take over tasks and increasingly participate in planning and operations. What it will not do is choose your values, articulate your purpose, or own the consequences of your decisions. Those remain human responsibilities. Leaders who understand this and lead with aligned head, heart, and hands will not just survive this transition; they will set the standard for what high performance looks like in an intelligent world.

As you look at your own organization this year, choose one concrete guardrail for the Heart, one strategic boundary for the Head, and one execution change for the Hands, and put all three in place before you green-light your next AI initiative. Use this simple template to make your commitment specific:

Our Heart guardrail: AI will never _____________ without ______________.

Our Head boundary: We will only use AI for _________________________.

Our Hands change: Starting this week, we will _______________________.

Pressure‑test your ideas by emailing them to us or scheduling a 15‑minute call so we can refine them together.

Management and Execution with AI at Your Side

It is clear that humans will run management and execution in the age of AI; the real question is whether they choose to do it by design. AI is already very good at drafting content, summarizing information, analyzing patterns in data, and coordinating routine tasks faster and at a larger scale than any individual manager, but that does not make managers obsolete – it changes the job. The role shifts from being the person who personally does or approves everything to being the person who understands what AI can do, decides where it fits, and uses uniquely human capabilities to steer how work actually gets done.

In practical terms, this means embracing AI as a power tool rather than seeing it as a rival. Day‑to‑day, AI can build first drafts of reports, pull insights from customer feedback, prioritize queues, suggest schedules, and flag anomalies that deserve attention. Instead of competing with AI tools on these tasks, managers can learn how to frame the right questions, review AI outputs critically, and connect them to a context, a purpose, a why, a what‑if.

The mindset shift starts with seeing your own value differently. If a virtual assistant can answer routine questions faster than you can, your value is no longer in being the bottleneck for information. Rather than sidelining automations and efficiencies, managers can adopt a broader perspective on how systems, processes, and technologies can remain safe, clear, efficient, useful, and trustworthy. That may look like translating strategic guardrails into concrete rules for how AI is used, coaching people on when to lean on the tool and when to slow down, and creating psychological safety so anyone on the team can say, “The model is wrong here,” without fear. It is your judgment, not your keystrokes, that becomes the center of gravity.

As AI takes over more analysis and coordination, the human premium moves to skills like influencing, mentoring, and cross‑functional problem solving. Employees who lean into these strengths – and who build basic fluency in how AI works – are more likely to move into emerging positions such as AI adoption lead, human‑AI workflow designer, or people‑data insights manager. The opportunity for managers is to treat every interaction with AI today as practice for leading those more advanced hybrid solutions tomorrow.

AI‑philic leaders frame AI as a way to remove low‑value work and expand human responsibility, not as a replacement for people. They proactively invest in skills like prompt design, interpreting AI output, spotting bias, and knowing when to override, and they redesign roles so AI handles repetitive analysis while humans focus on decisions, relationships, and creative problem solving. Organizations following this path see better outcomes not only in productivity but also in retention, innovation, and customer experience.

Human‑in‑the‑loop management and execution is not about nervously waiting to be replaced by AI. It is about stepping forward as the person accountable for how AI is used and what it produces.

In the end, human‑in‑the‑loop management and execution means that humans remain responsible for designing the workflows, setting the guardrails, interpreting and challenging AI output, and making the final calls that affect customers and colleagues – using AI’s speed and scale to extend, not erase, their own judgment, creativity, and care.

Embrace this reality, and challenge yourself to:

Own the role of “human in charge.” Deliberately define where you – not AI – make the final call on customers, people decisions, and risk, and document those decision points so everyone knows when a human must step in.

Design clear guardrails and workflows. Map where AI is allowed to act, where it only suggests, and where it is blocked, then embed those rules directly into processes and tools so human review and escalation are built in, not ad hoc.

Build your AI fluency and feedback muscle. Learn what your AI tools are good at and where they fail, and create simple feedback loops where you and your team routinely review, correct, and improve AI outputs rather than accepting them at face value.

Reskill around uniquely human strengths. Invest your own development in judgment, communication, coaching, cross‑functional collaboration, and ethical reasoning, while learning just enough technical detail to collaborate effectively with AI and data experts.

Normalize experimentation in a safe “sandbox.” Create low‑stakes spaces and pilot use cases where people can practice working with AI, make mistakes, and refine workflows before they touch customers or critical operations, measuring success by human‑AI collaboration, not just automation rates.

If you choose to be a human who works the AI, pick one of the five actions above and adopt it as a team. Document and share what you tried, what you learned, and what human‑in‑the‑loop leadership looks like for you and your team by clicking the link below.

Excerpt from the Author’s Note of ‘Elevate Hope: From Mindset to Framework to Economy’

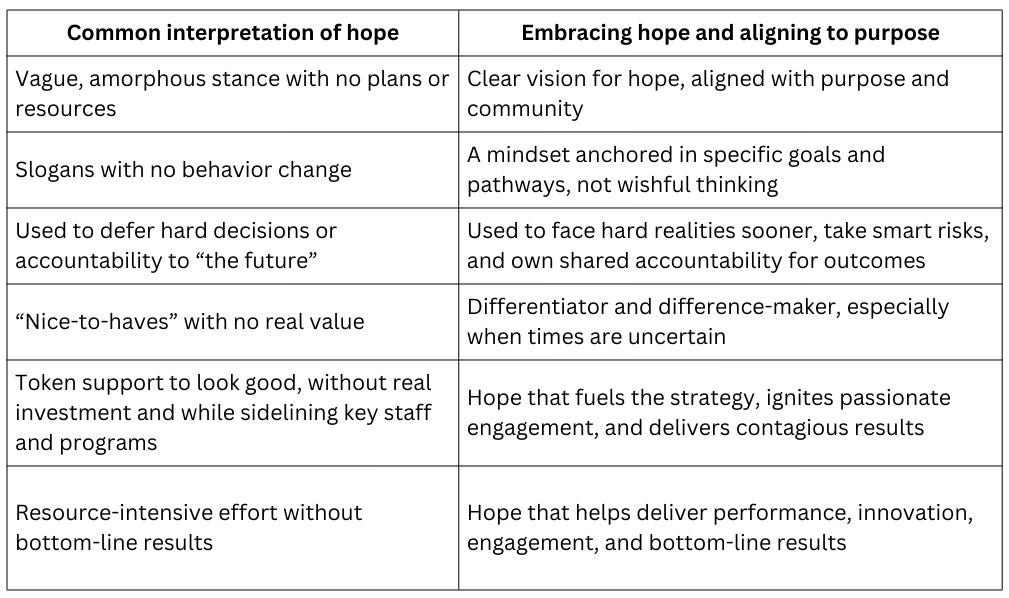

To those who directly or indirectly said “hope is not a strategy,” I quietly and respectfully thought otherwise. My privately fed hope fueled perseverance through pivots, restructurings, and market cycles, helping me shift roles, companies, and technologies with as much grace as I could manage. It stayed in the background, unnamed and unsupported, powerful enough to carry me but not yet visible enough to reshape the systems around me.

In this time of deep discord, we need this message of hope more than ever. This book describes the Hope Mindset as the foundation for the Hope Framework, enabled by FountainBlue’s six‑part vision and fueling the Hope Economy. It weaves together the in-depth research, the inspiring stories, and the practical strategies for leveraging hope to achieve business results at the individual, team, and organizational levels.

This Month’s Hope-in-an-Age-of-Disillusionment Story

Chapter 3 from Part One of FountainBlue’s book is below, showcasing how Hope is the Map.

Chapter 3: The Digital Library (1990–1995)

Hope is the Map.

The map of truth is built by the hand that seeks it.

The Dawn of the Web (The Times)

The years 1990 through 1995 began with ideological victory following the collapse of the Soviet Union. Yet, the promise of the World Wide Web was immediately betrayed by its chaotic reality. The barrier of state censorship vanished, replaced by new complexity.

Information was physically constrained by archaic systems (microfiche) and logically scattered by rudimentary search tools. This created deep frustration: people knew the knowledge existed but were defeated by the sheer complexity of finding it.

Crucially, the corporate world intensified the problem by redirecting budgets and talent toward Y2K preparations, draining resources that could have supported public access and digital innovation. This reinforced the belief that institutions prioritized internal survival over meaningful access to information.

Access to essential knowledge was further constrained by economic and legal barriers: data trapped behind costly paywalls, institutional licenses, and bureaucratic restrictions. In a nation redefining its global role after the Soviet collapse, this deepened the emerging digital divide and intensified the struggle to keep pace with the information age.

The Entrepreneur’s Perspective (The Voice of Ilana)

My Russian Jewish father was a gifted physicist who spent his early career limited by a lack of access to reliable data. When we immigrated to Washington, D.C., in 1990, we believed we were entering a city where information shaped power and policy. It did not take long to learn that even here, essential knowledge was scattered across paywalls, archives, and institutional silos.

While earning my Library and Information Science degree, I discovered how broken the system truly was. Policy documents were inconsistent, legal references conflicted with agency records, and basic data sets lived in formats no one could search. I often spent hours trying to reconcile sources that should have matched but did not. At first I thought I was doing something wrong. I assumed the problem was my inexperience. It took time, frustration, and many failed attempts before I accepted the truth. The problem was not me. It was the system.

I reached out to two friends who understood the landscape better than I did. Dmitri, my former lab partner, was a skilled information architect. Gavin, a political science intern on Capitol Hill, understood how messy policy work could be from inside the machine. Together, we agreed that the real issue was not a lack of information but a lack of verified organization. People were drowning in sources but starving for truth.

Washington, D.C. proved to be the ideal place to test a solution. Every day brought new examples of misaligned data shaping public decisions. Every hallway, agency, and policy conversation highlighted the same problem: no one had a clear path to reliable information. The urgency of the environment sharpened our mission.

We created ClariPath, a system centered on a Dynamic Indexing Engine that authenticated sources, cross‑checked contradictions, and highlighted verified connections to make messy data easier to trust.

We named it ClariPath because its purpose was simple: create a clear path through disconnected information. One of our earliest technical anchors was the “recursive validation loop,” a protocol that forced the engine to re‑verify any data point that came from more than one source, essentially double‑checking any fact that appeared in multiple places. It was slow at first and often produced confusing flags, but it eventually became our signature advantage.

Our first pilots were messy. The system crashed repeatedly when agencies supplied inconsistent metadata. At one point, a senator’s aide called our early dashboard “a very organized disaster.” But even the critics admitted that the structure showed promise, and with every fix, the engine became sharper.

When our clients began to see results, momentum grew. Agencies reported that ClariPath reduced the time required to confirm a legal citation by 36 percent. Policy teams said it cut their research duplication almost in half. A university partner told us that, thanks to our guardrails, its students had stopped misquoting legislative data for the first time in years. These results proved that we were not just organizing information. We were restoring access to truth.

When I finally sought angel investment, the response was cold. Several investors shrugged and said, “We already have the World Wide Web,” as if the existence of raw data meant everyone could use it. One even said, “Some people will always get information faster. That’s life.” To me, that was exactly the problem.

I refused to accept a world where access to verified knowledge was treated as a privilege instead of a civic right. So we kept building. And we kept believing that truth, properly organized, could change everything.

The Mentor’s Intervention (The Voice of Judge Elias Thorne)

As the father of a mixed-race daughter studying policy, I witnessed the price of systemic disadvantage firsthand. Her passionate recommendation for ClariPath immediately resonated with my conviction that justice requires equal access to verified information.

My reasoning for the partnership was threefold. As a jurist, I sought equal standing for legal arguments. As a civic leader in Washington, D.C., I needed reliable data to inform legislation. And as a father, I wanted to connect my disengaged teenage children with a mission of substance. I believed the emerging generation deserved a barrier-free Web.

I knew the investors had missed the point: access was unequal. Ilana and I were fighting for justice, but I had to know if she could deliver a fundable business. I presented hypothetical cases drawn from my time on the bench, forcing her to test her filtering guardrails. I needed to see if she would compromise integrity for a powerful client.

Her mind was sharp yet flexible. She resisted my moral probes with the cold logic of an outsider and the rationality of a collaborator. We shared a commitment to truth. In ClariPath, we saw a chance to provide equitable access and business value.

Better Together

After the funding was secured, Judge Thorne asked Ilana to work with his daughter Eliza on an election districting project for her public policy program. It was a high-stress assignment with a tight deadline, and the data was a maze of voter rolls, demographic files, precinct boundaries, and census layers that had never been aligned. The work exposed every flaw in ClariPath’s early system. Some datasets refused to be imported. Others contradicted one another outright.

There were nights when Ilana and Eliza stared at the screen in frustration, wondering if they would get anything to line up at all. But as the recursive validation protocols improved, something remarkable happened. ClariPath began to reconcile the data and surface the inconsistencies that mattered most. Their final report highlighted gaps in district shapes and showed areas where community representation had been unintentionally weakened. The analysis earned Eliza academic recognition and proved that ClariPath could handle real civic problems under real pressure.

The project energized the rest of the Thorne family. Eric and Emily, the younger teens, asked to use ClariPath for their sustainability research assignments. Their work revealed inconsistencies in environmental impact reports from different agencies and caught the attention of one of their teachers. Judge Thorne used that success to secure pilot programs with think tanks and two government agencies working on environmental policy.

The collaboration was not easy. Ilana and the team often had to explain why verification added extra time, and some partners resisted anything that slowed their workflow. At one point, a policy researcher argued that accuracy was “less important than speed,” which led to a tense week of meetings. But each time a pilot surfaced a flaw that would have gone unnoticed, ClariPath earned more credibility. Within three months, participating teams reported a 44 percent reduction in duplicated research and a significant decrease in conflicting citations.

Judge Thorne brought everyone together often, gathering Ilana, her team, and his own children around his dining table for spirited role-playing sessions. He taught them how to anticipate legal pushback, how to negotiate competing interests, and how to stand firm on the principle that verified information protects everyone. These evenings formed a deep camaraderie that shaped the culture of the product itself.

The final breakthrough came when a major university’s policy school approved a long-term pilot. Their research center reported a measurable drop in citation errors and praised ClariPath for bringing order and structure to previously scattered information. Eliza received an internship soon after, and the team celebrated the confirmation that their work had a real impact.

Their collaboration showed that when institutions can trust the information they rely on, they make better decisions. ClariPath was no longer just a tool. It had become a steady partner in a city where accurate information shapes the direction of the nation.

Hope is the Map.

The map of truth is built by the hand that seeks it.

Order YOUR regular or workbook copy of Hope in an Age of Disillusionment or our upcoming book Elevate Hope: From Mindset to Framework to Economy by visiting https://www.amazon.com/author/lindaholroyd

Email your purchase receipt for the Hope in an Age of Disillusionment workbook and gain early access to FountainBlue’s Hope Toolkit Companion web app.